Seamlessly blending the real and virtual worlds is key to greater immersion and a better user experience in augmented reality (AR). Photorealistic rendering and non-photorealistic rendering (NPR) are two ways to achieve this goal. NPR stylizes both the real and the virtual content into a common abstract look, so that the two become hard to tell apart.

Simple NPR techniques that use information from a single rendered image have been demonstrated in real-time AR systems. More complex NPR techniques require visual coherence across multiple video frames, and typical offline algorithms for them are expensive, require global knowledge of the video sequence, or both. To use such techniques in real-time AR, we need fast algorithms that do not require information from frames after the one currently being rendered.

This page describes several of our projects in NPR for AR, including watercolor-inspired rendering and painterly rendering, in both image space and model space.

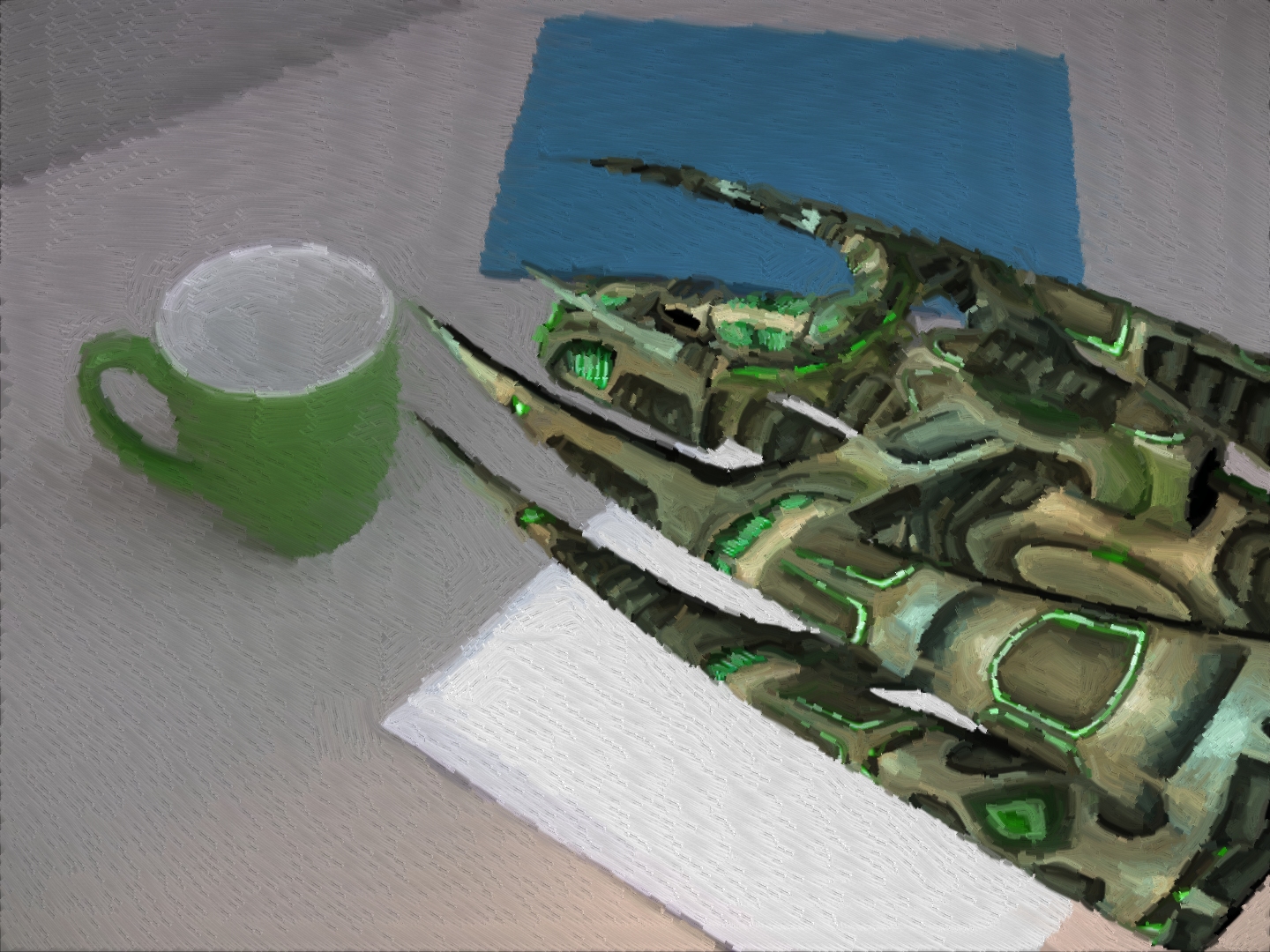

[Project 1] Watercolor-Inspired NPR for AR

In this project we present a watercolor-like NPR style for AR applications that provides a degree of visual coherence. Each AR frame is tiled with a Voronoi template. To maintain coherence, we regenerate the template for every frame by re-tiling the Voronoi cells along the strong edges in that frame.

A virtual dragon on a real background. [VRST’08]
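The per-frame Voronoi re-tiling in Project 1 can be sketched in Python with NumPy. This is a minimal sketch under our own assumptions, not the VRST’08 implementation: a jittered grid of seeds, regenerated from the same random seed every frame, stands in for the base Voronoi template; extra seeds are placed on pixels whose gradient magnitude exceeds a threshold; and each cell is filled with its mean color as a crude flat wash. The functions `jittered_grid`, `edge_seeds`, `voronoi_labels`, `retile` and `flat_wash` and the `threshold` and `spacing` parameters are hypothetical.

```python
import numpy as np

def jittered_grid(shape, step, seed=0):
    """Hypothetical base seeds: a jittered grid, identical every frame for a fixed seed."""
    rng = np.random.default_rng(seed)
    h, w = shape
    ys, xs = np.mgrid[step / 2:h:step, step / 2:w:step]
    pts = np.stack([ys.ravel(), xs.ravel()], axis=1)
    return pts + rng.uniform(-step / 4, step / 4, pts.shape)

def edge_seeds(gray, threshold, spacing):
    """Extra seeds on strong edges, at most one per spacing x spacing block."""
    gy, gx = np.gradient(gray.astype(float))
    ys, xs = np.nonzero(np.hypot(gx, gy) > threshold)
    seen, seeds = set(), []
    for y, x in zip(ys, xs):
        key = (y // spacing, x // spacing)
        if key not in seen:
            seen.add(key)
            seeds.append((y, x))
    return np.array(seeds, dtype=float).reshape(-1, 2)

def voronoi_labels(shape, seeds):
    """Brute-force Voronoi tiling: label every pixel with the index of its nearest seed."""
    h, w = shape
    yy, xx = np.mgrid[0:h, 0:w]
    pix = np.stack([yy.ravel(), xx.ravel()], axis=1).astype(float)
    d2 = ((pix[:, None, :] - seeds[None, :, :]) ** 2).sum(axis=2)
    return d2.argmin(axis=1).reshape(h, w)

def retile(base_seeds, gray, threshold=0.2, spacing=4):
    """Rebuild this frame's template: fixed base seeds plus this frame's edge seeds."""
    seeds = np.vstack([base_seeds, edge_seeds(gray, threshold, spacing)])
    return seeds, voronoi_labels(gray.shape, seeds)

def flat_wash(image, labels):
    """Fill each Voronoi cell with its mean color (a crude stand-in for a watercolor wash)."""
    out = np.zeros_like(image, dtype=float)
    for k in np.unique(labels):
        mask = labels == k
        out[mask] = image[mask].mean(axis=0)
    return out
```

Because the base seeds come from the same random seed every frame, cells in flat regions stay in place between frames, while the edge seeds let cell boundaries follow the strong edges of the current frame. The all-pairs distance computation is only practical for small frames.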

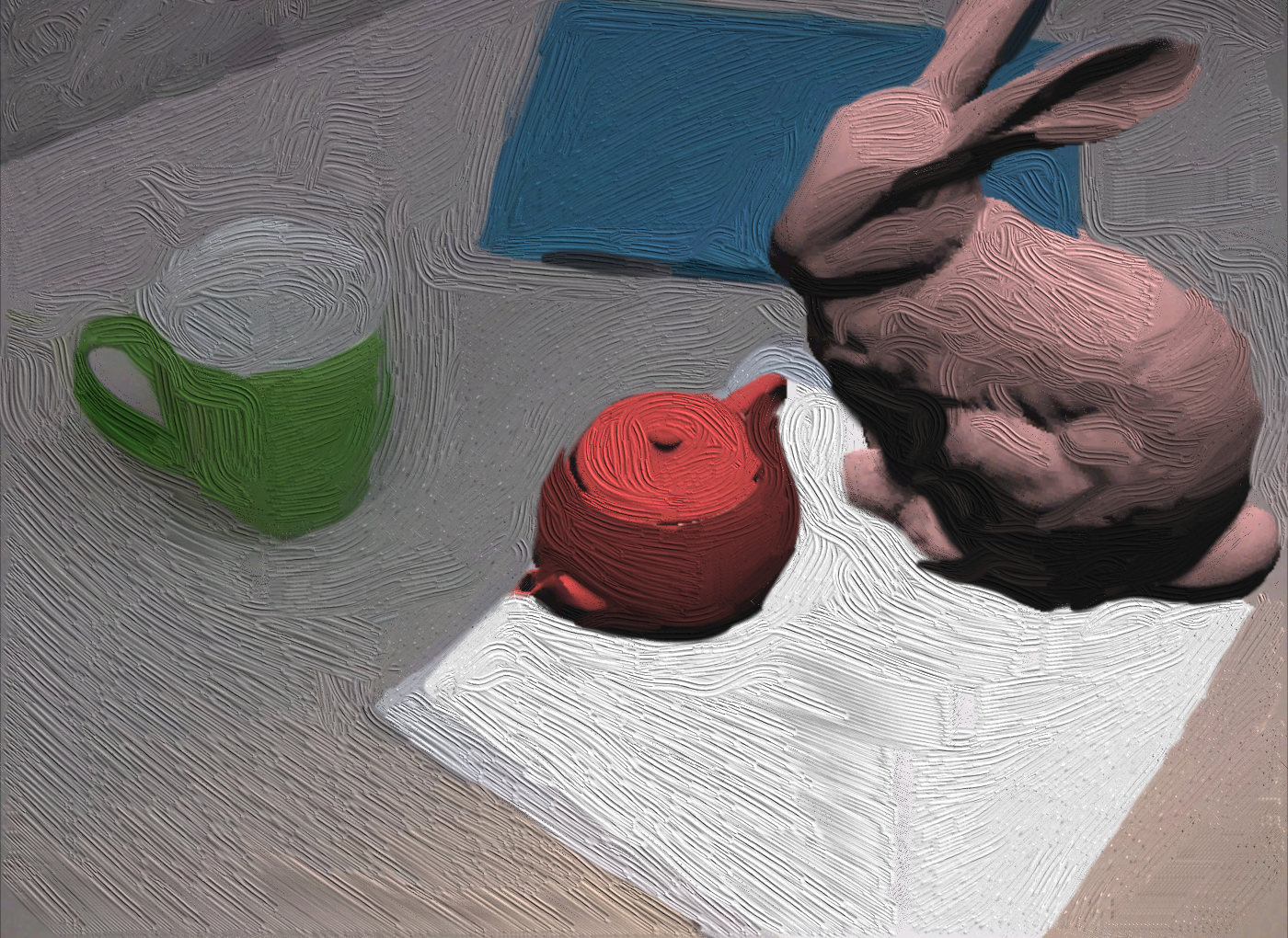

[Project 2] Painterly Rendering with Coherence for AR in the Image Space

We present a painterly rendering algorithm for AR applications. The algorithm paints composited AR video frames with bump-mapped, curly brushstrokes. For each frame we create a tensor field that defines the brushstroke directions. To interpolate the sparse tensor values over the whole frame, we use tensor field pyramids, which avoid the numerical problems caused by the global radial basis function interpolation used in existing algorithms. Because AR applications run in real time and cannot look ahead in the video, we use only information from the current and previous frames, and we provide temporal coherence for the painted video in two ways. First, brushstroke anchors are warped from the previous frame to the current frame based on their neighboring feature points. Second, brushstroke appearance is reshaped by blending two parameterized brushstrokes. The major difference between our algorithm and existing NPR work in general graphics and in AR/VR is that we use feature points across AR frames to maintain coherence in the rendering. The tensor field pyramids and the additional brushstroke properties, such as cut-off angles, are also novel features that extend existing NPR algorithms.
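The sparse-to-dense tensor interpolation in Project 2 can be illustrated with a push-pull pyramid in Python with NumPy. This is a minimal sketch under stated assumptions rather than the published algorithm: we assume the sparse tensors are structure tensors g g^T taken at strong-gradient pixels, stored as (a, b, c) for the symmetric matrix [[a, b], [b, c]], and we fill the gaps with a standard push-pull scheme (weighted 2×2 averaging going down the pyramid, nearest-neighbor upsampling coming back up). The functions `push_pull`, `structure_tensors` and `stroke_angles` and the `threshold` value are hypothetical.

```python
import numpy as np

def push_pull(values, weights):
    """Fill unknown pixels (weight 0) of an (H, W, K) field from coarser pyramid levels."""
    h, w = weights.shape
    if h == 1 and w == 1:
        return values.copy()
    # Pad to even size with zero-weight (unknown) pixels.
    ph, pw = h + h % 2, w + w % 2
    v = np.zeros((ph, pw, values.shape[2]))
    v[:h, :w] = values
    wt = np.zeros((ph, pw))
    wt[:h, :w] = weights
    # Push: weighted 2x2 block averages form the next, coarser level.
    wv = (v * wt[..., None]).reshape(ph // 2, 2, pw // 2, 2, -1).sum(axis=(1, 3))
    ws = wt.reshape(ph // 2, 2, pw // 2, 2).sum(axis=(1, 3))
    coarse = np.divide(wv, ws[..., None], out=np.zeros_like(wv), where=ws[..., None] > 0)
    coarse = push_pull(coarse, np.minimum(ws, 1.0))
    # Pull: upsample the filled coarse level and use it wherever this level is unknown.
    up = coarse.repeat(2, axis=0).repeat(2, axis=1)[:h, :w]
    return np.where((weights > 0)[..., None], values, up)

def structure_tensors(gray, threshold):
    """Sparse structure tensors (a, b, c) = (gx*gx, gx*gy, gy*gy) at strong gradients."""
    gy, gx = np.gradient(gray.astype(float))
    tensors = np.stack([gx * gx, gx * gy, gy * gy], axis=-1)
    weights = (np.hypot(gx, gy) > threshold).astype(float)
    return tensors, weights

def stroke_angles(gray, threshold=0.1):
    """Dense brushstroke angles (radians) from the interpolated tensor field."""
    tensors, weights = structure_tensors(gray, threshold)
    dense = push_pull(tensors, weights)
    a, b, c = dense[..., 0], dense[..., 1], dense[..., 2]
    grad_angle = 0.5 * np.arctan2(2 * b, a - c)  # major eigenvector = gradient direction
    return grad_angle + np.pi / 2                # assume strokes run along edges
```

Interpolating tensors rather than direction vectors avoids the sign ambiguity between g and -g. Each pyramid level only averages neighboring pixels, so no global linear system has to be solved, which is where a global radial basis function fit can run into numerical trouble.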

A virtual bunny and teapot on a real background. [ISMAR’10 Poster and ISVRI’11 paper]
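The two temporal-coherence steps of Project 2 can be sketched as follows. This is a hedged illustration, not the published method: we assume feature points have already been tracked between the previous and current frames (tracking itself is outside this sketch), we move each anchor by the inverse-distance-weighted displacement of its `k` nearest feature points, and we treat a parameterized brushstroke as a plain array of parameters that is blended linearly. The names `warp_anchors` and `blend_strokes` and the parameters `k`, `eps` and `alpha` are hypothetical.

```python
import numpy as np

def warp_anchors(anchors, feat_prev, feat_curr, k=4, eps=1e-6):
    """Warp (A, 2) anchors using (F, 2) feature points tracked from the previous frame."""
    disp = feat_curr - feat_prev  # per-feature motion between the two frames
    # Distances from every anchor to every feature point in the previous frame.
    dist = np.linalg.norm(anchors[:, None, :] - feat_prev[None, :, :], axis=2)
    k = min(k, len(feat_prev))
    nearest = np.argsort(dist, axis=1)[:, :k]  # k nearest features per anchor
    w = 1.0 / (np.take_along_axis(dist, nearest, axis=1) + eps)
    w /= w.sum(axis=1, keepdims=True)          # inverse-distance weights sum to 1
    return anchors + (w[..., None] * disp[nearest]).sum(axis=1)

def blend_strokes(prev_params, new_params, alpha=0.5):
    """Linearly blend the previous stroke's parameters with the newly computed ones."""
    prev_params = np.asarray(prev_params, dtype=float)
    new_params = np.asarray(new_params, dtype=float)
    return (1.0 - alpha) * prev_params + alpha * new_params
```

A small `alpha` favors the previous stroke and suppresses flicker, at the cost of lagging behind fast changes in the scene.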

[Project 3] A Coherent NPR Framework for AR in the Model Space

We explore NPR algorithms with coherence support for AR and propose a general rendering framework that provides better coherence when creating NPR effects for AR applications. We demonstrate the framework by creating a painterly rendering effect for AR. To obtain better visual coherence, we spread 3D anchor points over model surfaces and update the anchor list from frame to frame to maintain an even anchor density in 2D screen space. To further improve coherence, we also average multiple brushstrokes from a history list at each anchor.

A virtual alien spaceship hovering above a real table. [submission in preparation]
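The anchor bookkeeping of the model-space framework in Project 3 can be sketched in Python with NumPy. The details are our assumptions, not the framework's actual design: candidate anchors are a precomputed pool of 3D surface samples, the camera is a 3×4 projection matrix `P`, the screen is divided into square cells, each cell keeps at most one anchor (older anchors win, so they persist across frames), empty cells are filled from the pool, and each anchor keeps a bounded history of stroke parameter vectors whose mean is the stroke to draw. Occlusion and back-face tests are omitted. `AnchorSet`, `project`, `cell` and `history` are hypothetical names.

```python
import numpy as np
from collections import deque

def project(P, pts):
    """Project (N, 3) points with a 3x4 camera matrix; return pixel coords and depth."""
    hom = np.hstack([pts, np.ones((len(pts), 1))]) @ P.T
    return hom[:, :2] / hom[:, 2:3], hom[:, 2]

class AnchorSet:
    def __init__(self, candidates, cell=8, history=5):
        self.candidates = np.asarray(candidates, dtype=float)  # (N, 3) surface samples
        self.cell = cell          # screen-space cell size in pixels
        self.history = history    # number of past strokes kept per anchor
        self.active = []          # indices of active anchors, oldest first
        self.strokes = {}         # anchor index -> deque of stroke parameter vectors

    def update(self, P, width, height):
        """Re-select anchors so that each screen cell holds at most one."""
        xy, depth = project(P, self.candidates)
        visible = ((depth > 0) & (xy[:, 0] >= 0) & (xy[:, 0] < width)
                   & (xy[:, 1] >= 0) & (xy[:, 1] < height))
        cells = {}
        # Existing anchors claim their cells first; remaining candidates fill empty cells.
        for i in self.active + list(range(len(self.candidates))):
            if visible[i]:
                key = (int(xy[i, 0] // self.cell), int(xy[i, 1] // self.cell))
                cells.setdefault(key, i)
        kept = set(cells.values())
        old = [i for i in self.active if i in kept]
        self.active = old + sorted(kept - set(old))
        # Drop histories of removed anchors; start empty histories for new ones.
        self.strokes = {i: self.strokes.get(i, deque(maxlen=self.history))
                        for i in self.active}

    def add_stroke(self, i, params):
        self.strokes[i].append(np.asarray(params, dtype=float))

    def averaged_stroke(self, i):
        """Mean of the stroke parameters in this anchor's history list."""
        return np.mean(list(self.strokes[i]), axis=0)
```

Letting long-lived anchors keep their cells means an unchanged view keeps exactly the same anchors, while the bounded history averages out frame-to-frame jitter in the stroke parameters.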
