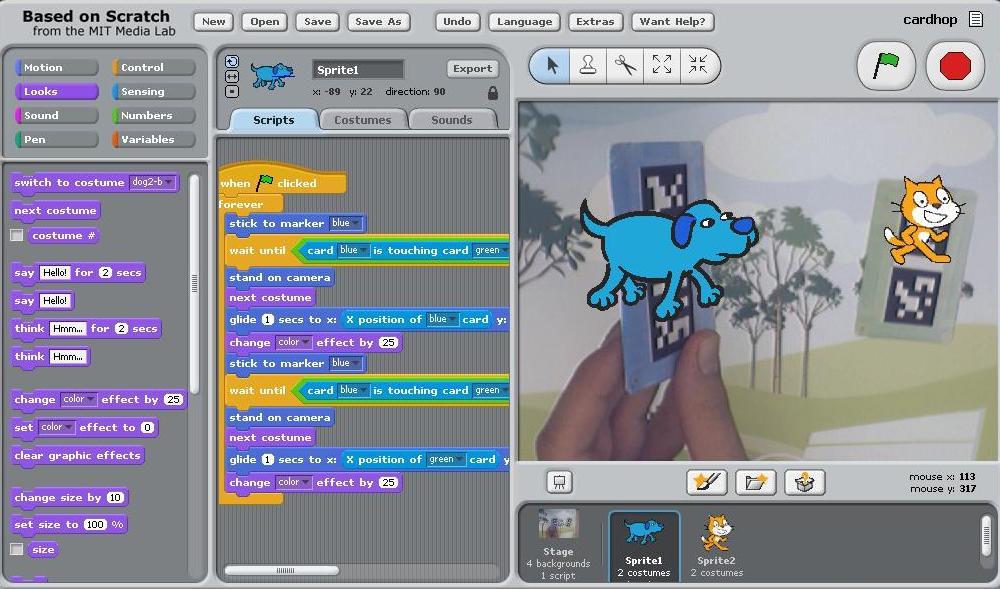

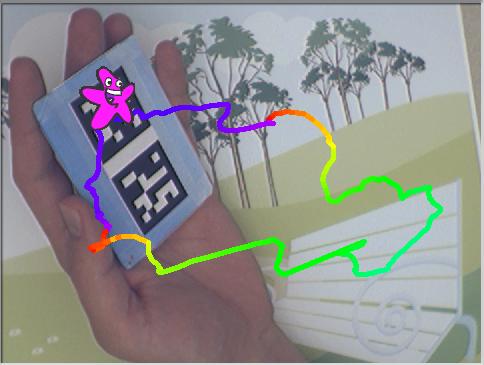

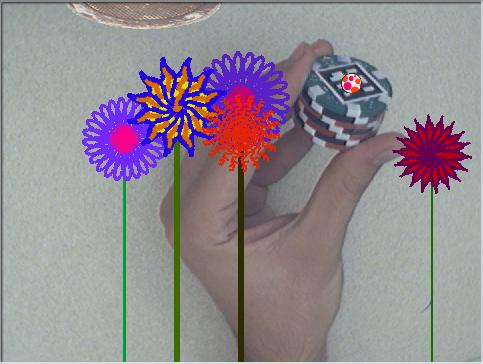

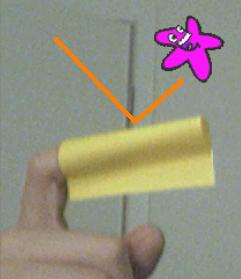

AR SPOT is an augmented-reality authoring environment for children. An extension of MIT’s Scratch project, this environment allows children to create experiences that mix real and virtual elements. Children can display virtual objects on a real-world scene observed through a video camera, and they can control the virtual world through interactions between physical objects. This project aims to expand the range of creative experiences for young authors, by presenting AR technology in ways appropriate for this audience. In this process, we investigate how young children conceptualize augmented reality experiences, and shape the authoring environment according to this knowledge.

Download (Windows only)

http://ael.gatech.edu/arspot/arSpotDistribution_2012Mar.zip

- Download the ZIP file, and unpack it.

- In the SpotDocumentation folder, you will find a file called “SPOT Cards”. This contains the tracker cards on the first page. Print these cards on a letter-size page.

- Ensure that a video camera is connected to your computer.

- In the SPOT folder, execute the “RunSpot.BAT” file.

- For more information, see the “SPOT Documentation” file in the SpotDocumentation folder.

AR SPOT Details

The source code for Scratch was modified to include a camera feed, and novel functions were added to the library of programming blocks. The video feed is processed by an external DLL which detects and tracks special objects in the physical environment. Information about the physical objects is then accessible through the programming blocks.

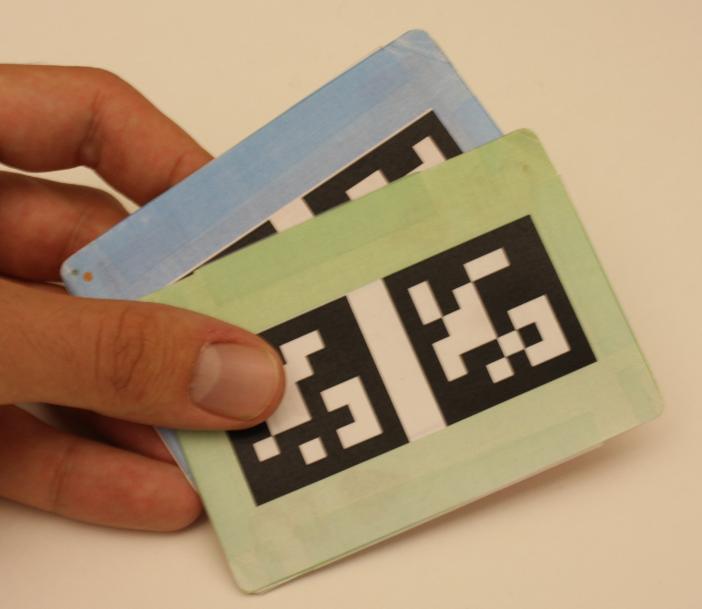

Users can interact through two types of objects: cards and knobs. The system tracks the square marker patterns in order to detect position and orientation of the objects.

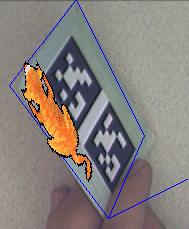

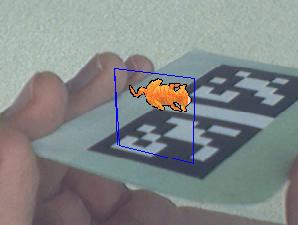
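To make the idea of recovering position and orientation from a square marker more concrete, here is a minimal sketch. It is not the tracking code used by the SPOT DLL, which is not described here. The function name `marker_pose_2d` and the corner ordering are assumptions made for illustration, and the sketch only recovers the 2D on-screen center and in-plane rotation, not a full 3D pose.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def marker_pose_2d(corners: List[Point]) -> Tuple[Point, float]:
    """Return (center, rotation in degrees) of a square marker in the image.

    `corners` are the four image-space corners, ordered top-left, top-right,
    bottom-right, bottom-left as printed on the card (an assumed convention).
    """
    if len(corners) != 4:
        raise ValueError("expected exactly four corners")
    # The center is the average of the four corners.
    cx = sum(x for x, _ in corners) / 4.0
    cy = sum(y for _, y in corners) / 4.0
    # The direction of the marker's top edge gives its in-plane rotation.
    (x0, y0), (x1, y1) = corners[0], corners[1]
    rotation = math.degrees(math.atan2(y1 - y0, x1 - x0))
    return (cx, cy), rotation
```

A real tracker would also need to find the marker in the camera image and decode its pattern to tell one card from another; this sketch starts from corners that have already been detected.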

There are three ways in which actors can be rendered in the physical scene. One of these preserves the 2D character of the existing system by having actors simply follow the position of the object on the screen: if a physical object is moved, the actor matches the object’s 2D position on the screen, but it does not change size or orientation when the object is moved farther away or rotated. In the other two cases, the actors become more 3D: they can lie or stand on the physical objects, and their appearance changes to follow the 3D location of the object.

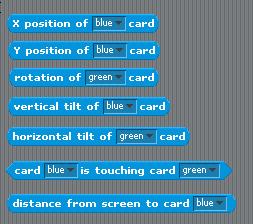

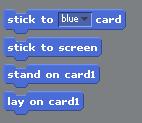
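The difference between the three rendering modes can be sketched in a few lines of Python. This is an illustration only, not SPOT’s implementation: the names `RenderMode`, `CardPose`, `ActorTransform`, `place_actor`, and the `REFERENCE_DISTANCE` constant are all hypothetical, and the inverse-distance scaling rule is an assumption.

```python
from dataclasses import dataclass
from enum import Enum


class RenderMode(Enum):
    FOLLOW_2D = "follow"  # actor tracks only the on-screen position
    LAY = "lay"           # actor lies flat on the object
    STAND = "stand"       # actor stands upright on the object


@dataclass
class CardPose:
    """Hypothetical tracker output for one card."""
    screen_x: float      # on-screen position, in pixels
    screen_y: float
    distance: float      # distance from the camera, arbitrary units
    rotation_deg: float  # in-plane rotation of the card


@dataclass
class ActorTransform:
    x: float
    y: float
    scale: float
    rotation_deg: float
    upright: bool        # True only when the actor stands on the card


REFERENCE_DISTANCE = 50.0  # assumed distance at which the actor has scale 1.0


def place_actor(pose: CardPose, mode: RenderMode) -> ActorTransform:
    """Compute where and how to draw an actor attached to a card."""
    if mode is RenderMode.FOLLOW_2D:
        # Position follows the card; size and orientation stay fixed.
        return ActorTransform(pose.screen_x, pose.screen_y, 1.0, 0.0, False)
    # In the 3D modes the actor shrinks with distance and turns with the card.
    scale = REFERENCE_DISTANCE / max(pose.distance, 1e-6)
    return ActorTransform(pose.screen_x, pose.screen_y, scale,
                          pose.rotation_deg, mode is RenderMode.STAND)
```

With a card at twice the reference distance and rotated by 30 degrees, `place_actor(pose, RenderMode.FOLLOW_2D)` keeps the actor at scale 1.0 with no rotation, while `place_actor(pose, RenderMode.STAND)` returns scale 0.5 and the card’s 30-degree rotation.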

New blocks were added to the programming library to make these interactions possible. Motion blocks were added to tell sprites to stick/unstick to the physical objects. Sensing blocks were added for detecting object properties such as distance, rotation, touch, etc. New events were also added which signal when objects become visible or touch.
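As a rough sketch of how “becomes visible” and “touch” events might be derived from tracking data, the Python below compares each frame with the previous one. It is not taken from the SPOT source: the `CardEvents` class, the `TOUCH_DISTANCE` threshold, and the rule that two cards touch when their centers are close on screen are assumptions made for illustration. The card names “blue” and “green” are the ones that appear in the stick blocks.

```python
import math
from typing import Dict, List, Set, Tuple

Position = Tuple[float, float]

TOUCH_DISTANCE = 40.0  # assumed threshold, in pixels, below which two cards "touch"


class CardEvents:
    """Turns per-frame tracking data into 'visible' and 'touch' events."""

    def __init__(self) -> None:
        self._visible: Set[str] = set()
        self._touching: Set[Tuple[str, str]] = set()

    def update(self, frame: Dict[str, Position]) -> List[str]:
        """`frame` maps card names to positions for cards seen this frame."""
        events: List[str] = []

        # A card "becomes visible" if it is seen now but was not last frame.
        now_visible = set(frame)
        for name in sorted(now_visible - self._visible):
            events.append(f"{name} becomes visible")
        self._visible = now_visible

        # Two cards "touch" when their centers come within the threshold.
        now_touching: Set[Tuple[str, str]] = set()
        names = sorted(frame)
        for i, a in enumerate(names):
            for b in names[i + 1:]:
                if math.dist(frame[a], frame[b]) <= TOUCH_DISTANCE:
                    now_touching.add((a, b))
        for a, b in sorted(now_touching - self._touching):
            events.append(f"{a} touches {b}")
        self._touching = now_touching
        return events
```

Because only changes between frames are reported, an event fires once when a card appears or when two cards first come together, rather than on every frame in which the condition holds.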

Future Outlook

This environment should entice more children to create computer-based experiences, while also generating data about which mappings between the physical and virtual worlds are intuitive. By leveraging the real world, mixed-reality technology can make it easier for children to explore the capabilities of virtual environments, because physically interacting with a virtual environment may be more intuitive than using a typical keyboard/mouse interface. In crafting projects through this environment, Scratch programmers will generate mappings between tangible interactions and program behaviors; thus, a set of frequently used natural mappings will emerge from the user community. Researchers may also create applications that make use of novel interaction techniques, and use the community to determine if and how children grasp these concepts. In this sense, we expect that the community will become a partner in tangible interface research.

Resources

Download AR SPOT (Windows only, 50MB)

Video showing demo examples (WMV, 15.6 MB)

AR SPOT publication at the Interaction Design & Children 2009 conference (IDC’09)

19 comments


Wonderful news.

Will this function when a game/project is posted on scratch.mit.edu?

Author

This is not directly connected to the scratch.mit.edu projects site, but you may be able to manually import existing projects into the Spot system.

But, the version of Scratch on the MIT site is newer than what we used in Spot, so some blocks may be different and there may be compatibility issues when using existing projects.

We are happy to share the source code, if you want to upgrade it.

Any chance of a Mac version coming out? My kiddos would LOVE to play with this as they are familiar with Scratch and enjoy it so!

Thanks for sharing. This is so cool. Do you know if there are plans to extend it to Mac OS X?

Author

We are not currently planning to extend it to Mac, but are happy to share the code with anyone interested in doing so.

Is it possible to link an image to a custom spot card? If so, how? Any help would be greatly appreciated!

Author

I don’t understand your question – could you explain in more detail what you mean?

Thank you for your prompt response…

I want to create a custom spot card.

So, for example, if I want to have a picture of a “dog”, can I make a spot card with a picture of a dog on it and print it out?

Then how do I code the card to match the picture of the dog on the screen?

All I see in the program is stick to “green” or “blue”. Can I make a new variable, stick to “dog” for example, and program it to match the custom spot card that I created?

I apologize for the questions, as I am not the greatest of programmers and I want to learn this so I can teach students at the school I work at.

Thank you.

Karim

Author

Hi,

Right now, you cannot create a whole new card, sorry.

You can make a new card from an old card – for example, you can take the black barcode from the “blue” card, and put it on a picture of a dog. The software will detect the dog, because the software detects the barcode. However, the dog will be called “blue” in the Scratch program (e.g., you will need to use the ‘stick to “blue” card’ block). This might not be a problem if you make the dog blue, but unfortunately you cannot rename the card.

If you know how to program, you can take a look at the source code for SPOT, and rename the cards yourself. I believe it is fairly easy – let me know if you are interested.

I wouldn’t mind taking a look at the coding if you don’t mind sending it to me!

Thanks 🙂

Hello Iulian,

Is there any possibility of importing a SketchUp project into ArSpot?

Regards

Author

Sorry, no. Right now, ArSpot doesn’t support integration with Google SketchUp.

Are there plans for that?

Hi Iulian,

Can we use different kinds of pattern cards, or only the cards shown in the sample? Can we use 3D models (.OBJ or .3DS)? Does the manual teach how to use all the blocks?

Congratulations on your software.

Regards,

Marcio

Hello Mr. Radu, your software looks great, but as I’m currently running Android, I can’t get it. However, since you seem to be at the front of the A.R. tech wave, I was hoping you could point me to someone who could help me find a program that will allow me to perform virtual design physically, with holographic structures in augmented-reality virtual space. I’ve been looking for one for some time now, so it may not exist yet… but it is at least an idea with much potential.

Can anyone help me? Please, and Thanks to all.

Hi, I’m a 5th grade teacher in Alpharetta. How might I arrange for myself and another teacher to visit and see what you have been developing for children and education first hand?

Hi Kathy,

I just noticed this comment, can you drop me an email and we can chat directly?

Hi Iulian,

Congrats on developing ArSpot! My son loves it! The whole AR part is so fascinating and has great potential to make programming so much more interesting for kids to learn. I’m trying to explore further the possibility of using Scratch, ArSpot and Enchanting to teach programming to elementary school kids.

I mainly develop on Linux (CentOS) and was wondering if you could share the source, so that I could attempt to port it to Linux.

Cheers,

-atin

I am running Scratch 1.2.1 from the download file called arSpotDistribution_2012Mar. I get an error every time I try to change the background.

Sorry but the VM has crashed.

Exception code. C000005

Exception address: 00416990

Current byte code: 44

Primitive index: 0

It creates a dump file every time it crashes.

Please help
